"Just use ChatGPT.

It's basically the same thing."

Tabrez Alam

April 15, 2026 · Founder, eety.ai

"Just use ChatGPT. It's basically the same thing."

The man who said this to me had twenty-two years of patent practice behind him. He was not being lazy; he was being experienced. He had tried the specialised tools. Evaluated demos. Sat through pitch calls from people like me. And arrived at the kind of conclusion that only forms after two decades of watching software promises arrive with fanfare and leave quietly: paste the disclosure in, get the draft, spend an hour cleaning it up, send it to the client.

Same output quality. Two hours saved. Why pay for something extra?

I did not argue. I smiled, changed the subject, and then went home and did exactly what he said.

Because if he was right, I needed to know. And if he was wrong… well, I needed to know that too.

I took a real patent disclosure. Not a toy example, not a clean hypothetical, but an actual engineering brief from an actual inventor. The kind that arrives in your inbox with three PDFs attached, one of which is titled "FINAL_v3_ACTUALLY_FINAL.pdf."

I opened a fresh ChatGPT window. Pasted in the disclosure. Asked for independent claims.

They were… good. Genuinely, suspiciously good. The kind of good that makes you lean back and think: huh, maybe this is fine. The claims were structured correctly, the language was appropriately broad in the right places, there was a logical hierarchy. I was, honestly, impressed.

Then I asked for the detailed description.

The model wrote one. It was fluent, technically sophisticated, well-ordered, and it contained exactly one invention element that did not appear anywhere in the original disclosure. I am going to be very specific about that.

A specific technical detail. Invented. Confidently. Complete with an explanation of how it "integrates seamlessly with the other system components."

I nearly read straight past it. Not because I was careless. Because the prose around it was so convincingly written that my brain, trained by years of reading technical text, filled in the gap automatically.

The model had written something that sounded like something the inventor would have said. Which is a completely different thing from something the inventor actually said.

And that, right there, is the beginning of a very expensive problem. Not expensive in a "this sentence needs rewriting" sense. Expensive in a "you have now introduced an element into the record that the inventor did not disclose, did not invent, and cannot explain under oath" sense.

The attorney I had dinner with was saving two hours. He was also, without knowing it, occasionally introducing ghosts into his patent applications. Whether those ghosts ever came back to haunt him is a different story… but they were there. :)

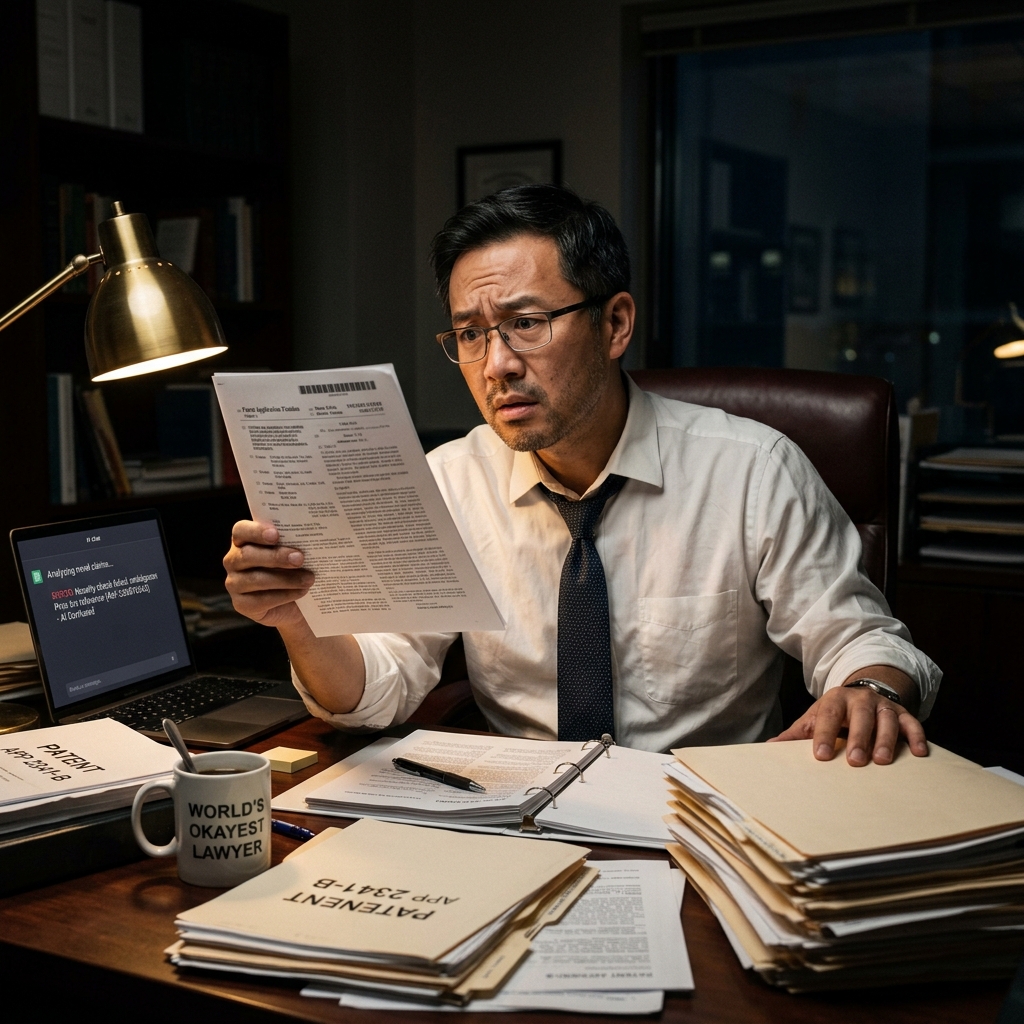

This is not a made-up example. This is Tuesday at 11pm. :)

There is a word for what a general LLM does with a patent disclosure. The word is WebMD.

Here is the thing about WebMD. It is not wrong. The conditions it lists are medically accurate. The symptoms it describes are real. The information comes from legitimate sources. And yet… every doctor on the planet has had a patient walk in having spent forty-five minutes on WebMD, absolutely convinced they have a tropical disease that has not been seen in this country since 1987.

WebMD does not know you. It does not know your history. It does not know what your doctor ruled out last Tuesday. It presents everything it knows with exactly the same level of confidence, regardless of whether it applies to your specific situation. It will tell you "possible causes include X, Y, and Z," and Z will be the one that sounds the most dramatic, which means Z is the one you will focus on for the next three days. :(

A general LLM works the same way with your patent disclosure. It knows an enormous amount about patents. It has seen more patent language than any attorney alive. But it does not know your invention. It does not know what the inventor told you verbally that is not in the brief. It does not know the prosecution history that makes a certain claim framing dangerous. It does not know that your client's competitor already filed something suspiciously similar in the EPO eighteen months ago.

So it writes. Fluently, confidently, and sometimes from a parallel universe.

The difference between knowing about patents and understanding this patent is exactly the gap that gets you into trouble.

And in patent law, the gap between those two things is measured not in quality of prose, but in validity of a granted claim. Which is a different kind of expensive.

Picture BRILLIANT INTERN. Graduated top of her class. 3.9 GPA. Read every patent textbook, and the MPEP cover to cover, twice. Can cite chapter and verse on claim differentiation, means-plus-function elements, and written description doctrine. Absolute unit of legal knowledge.

Sat down to draft her first independent claim… and wrote something that would have made a USPTO examiner very happy to issue a 112 rejection. Not because she did not know the law. Because she did not know this client, this technology, this firm's drafting style, and this attorney's preference for three levels of fallback claims.

BRILLIANT INTERN would improve dramatically with context. Give her the prosecution history, the reference filings, the inventor's three-hour interview notes, the prior art you are designing around, the scope decisions you have already made in your head before typing word one… and she becomes genuinely excellent.

The question is: who provides all that context? And in what format? And how does the document remember it across a 60-page application? And how do you check that it was actually used, and not hallucinated around?

This, specifically, is what patent drafting software is supposed to solve. Not the writing. The scaffolding around the writing.

Knows everything about patent law.

Knows nothing about your case. Yet.

You paste in the disclosure. You get a clean summary. "Wow, this actually understands the invention." You feel optimistic.

Claims are generated. They look structurally correct, nicely broad, properly dependent. You copy them into a Word doc and send a screenshot to a friend. "AI is going to replace patent attorneys lol"

You ask for the specification. The model delivers 3,000 words. Most of it is excellent. Some of it refers to "Figure 3" which does not exist yet. Reference numerals begin to drift. The embodiments start to repeat themselves with slightly different wording.

You paste in a prior art reference. The model adjusts, but now the claims are slightly different from the spec. You ask it to reconcile them. It writes new claims. The new claims use different terminology from the spec. The context window is getting restless.

You ask why claim 3 refers to "the processor" when there is no antecedent basis. The model agrees and fixes it. Now "the processor" changes in claims 3–7 but not in the spec. And somewhere on page 8 there is a technical detail the inventor never mentioned. You are now editing, not drafting. The two hours your senior partner claimed he saved? You are spending them. :)
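The antecedent-basis slip in that last step is mechanical enough that a few lines of code can catch a crude version of it. What follows is only a sketch under loud assumptions: the `check_claim` helper is hypothetical, it treats every noun as a single word, and it knows nothing about real claim grammar. It simply flags "the X" or "said X" when no "a X" or "an X" came first, either in the claim itself or in the claim it depends on.

```python
import re

# Matches a determiner followed by one word, e.g. "a sensor", "the processor".
# Assumption: single-word nouns only; real claims need proper noun-phrase parsing.
TERM = re.compile(r"\b(a|an|the|said)\s+([a-z]+)", re.IGNORECASE)


def check_claim(claim, inherited=frozenset()):
    """Return (missing, introduced) for one claim.

    missing: nouns used with "the"/"said" before any "a"/"an" introduction.
    introduced: every noun available to claims that depend on this one.
    """
    introduced = set(inherited)
    missing = []
    for match in TERM.finditer(claim):
        determiner, noun = match.group(1).lower(), match.group(2).lower()
        if determiner in ("a", "an"):
            introduced.add(noun)
        elif noun not in introduced and noun not in missing:
            missing.append(noun)
    return missing, frozenset(introduced)


# Illustrative claims, not taken from any real application.
claim_1 = "A system comprising a sensor and a memory."
claim_3 = "The system of claim 1, wherein the processor reads the sensor."

_, terms_from_claim_1 = check_claim(claim_1)
missing, _ = check_claim(claim_3, inherited=terms_from_claim_1)
print(missing)  # ['processor']
```

A chat window can run a check like this if you ask. The point is that nothing in a chat window runs it for you on every revision.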

Here is the thing that gets lost when we compare "ChatGPT vs eety.ai" or "Gemini vs DeepIP." The framing suggests that the difference is text quality. That one produces better prose than the other.

That is not the difference. The difference is in what problem each one is actually solving.

A general LLM is solving a text generation problem. Given a prompt, produce plausible, fluent text. It is extraordinary at this. The text it produces for patent purposes is, genuinely, often quite good.

Patent drafting, however, is not a text generation problem. It is at least six different problems at once, stacked on top of each other, and all of them need to be solved before the text is even considered.

This is the line I keep coming back to whenever someone asks me to explain what eety.ai is. Or DeepIP. Or Solve Intelligence. None of us invented the underlying model. We built the architecture around it.

DeepIP positions itself as the broadest platform: not just drafting, but the entire patent operation. Patentability analysis, drawings, prosecution, FTO, portfolio intelligence, all of it.

Its real differentiator is Word-native workflow. It comes to where most attorneys already live, rather than asking them to migrate. Style matching, firm templates, and jurisdictional conventions are all handled inside the tools you already use.

If your bottleneck is "I need AI everywhere across my entire patent practice," DeepIP appears to have thought about that question very carefully.

Solve describes itself as a copilot, a word that implies "I work alongside you," not "I replace you." 400+ IP teams use it. Its emphasis is on interactive drafting with the attorney in the driving seat at every step.

What stands out in Solve's positioning is something I find genuinely important: they explicitly talk about separating AI-assisted suggestions from inventor-provided disclosure. That is not a UX feature. That is a compliance feature.
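To make "compliance feature" concrete, here is one way that separation could look as a data structure. This is my own illustration, not Solve's implementation: the `Source`, `Passage`, and `unconfirmed_ai_passages` names are hypothetical, and the example sentences are made up. The idea is only that every passage carries its origin, so anything the model suggested stays flagged until the inventor confirms it.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    INVENTOR = "inventor"  # traceable to the disclosure or interview notes
    AI = "ai"              # suggested by the model, not yet confirmed


@dataclass(frozen=True)
class Passage:
    text: str
    source: Source
    confirmed_by_inventor: bool = False


def unconfirmed_ai_passages(draft):
    """Passages that should not be filed until the inventor confirms them."""
    return [
        p for p in draft
        if p.source is Source.AI and not p.confirmed_by_inventor
    ]


# Made-up passages for illustration only.
draft = [
    Passage("The housing encloses a temperature sensor.", Source.INVENTOR),
    Passage("The sensor integrates seamlessly with a cooling loop.", Source.AI),
]

for passage in unconfirmed_ai_passages(draft):
    print("NEEDS REVIEW:", passage.text)
```

A plain chat transcript has no equivalent of the `source` field, which is exactly how a ghost ends up looking like the inventor's own words.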

Multi-jurisdictional support, prosecution workflows, claim charts… Solve covers a wide canvas with a browser-native approach that removes the Word dependency.

I will be honest about the bias here and then tell you what I actually believe. eety.ai is making a more opinionated bet than either of the above: that the bottleneck in patent drafting is not writing speed; it is invention understanding.

A 15-dimension understanding model. Iterative gap-finding questions. Prior art integrated into comprehension, not just citation. A draft plan you approve before text is generated. Style DNA extracted from reference filings. Passage-level review annotations. Audit trail.

The thesis is: understand deeply, plan explicitly, generate last. It is narrower in scope than DeepIP and less collaborative in feel than Solve, but if your biggest problem is "I am not sure the AI understood the invention before it started writing," eety is built around exactly that fear.
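"Generate last" is easier to see as a gate than as a slogan. The sketch below is a toy under stated assumptions, not eety's actual code: `DraftPlan`, `generate_draft`, and the `write_text` callable (a stand-in for whatever model call produces text) are all hypothetical names. It shows only the ordering, in which generation refuses to run while gap-finding questions are open or the plan is unapproved.

```python
from dataclasses import dataclass, field


@dataclass
class DraftPlan:
    claim_outline: list
    open_questions: list = field(default_factory=list)  # unanswered gap-finding questions
    approved: bool = False


def generate_draft(plan, write_text):
    """Refuse to generate until gaps are closed and the plan is approved."""
    if plan.open_questions:
        raise ValueError(f"{len(plan.open_questions)} open question(s) remain")
    if not plan.approved:
        raise PermissionError("plan has not been approved by the attorney")
    return [write_text(item) for item in plan.claim_outline]


# Made-up plan for illustration; str.upper stands in for the model call.
plan = DraftPlan(
    claim_outline=["independent claim: sensor housing"],
    open_questions=["How is the sensor calibrated?"],
)

try:
    generate_draft(plan, write_text=str.upper)
except ValueError as err:
    print("blocked:", err)

plan.open_questions.clear()
plan.approved = True
print(generate_draft(plan, write_text=str.upper))
```

The design choice is that the cheap failure (an exception before any text exists) replaces the expensive one (a fluent draft built on a misunderstanding).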

This is not about which model is "smarter." GPT-4, Gemini, Claude: the underlying models available to patent AI tools are largely the same generation of technology available to the consumer tools. Probably literally the same models, in many cases.

The difference is the architecture around the model. Think of it like plumbing. The water pressure is the same in every building. What determines whether you get a hot shower or a cold surprise is the pipe design.

I want to be careful here, because I am building a company that competes with "just use ChatGPT," which means I have an obvious incentive to tell you ChatGPT is useless for patents. That would be dishonest. So let me say the opposite of that.

ChatGPT and Gemini are excellent for a surprising number of things in patent work.

In many teams, ChatGPT will remain a very useful assistant layer alongside a purpose-built tool. There is no rule that says you cannot use both. The senior attorney I had dinner with? I would tell him to keep using it, just not as his production drafting system.

The word "assistant" is doing real work in that last paragraph. An assistant layer is not the same as a production system. And once you have produced a patent application that someone may one day sue you over, that distinction matters enormously.

I started building eety.ai because I watched smart, experienced patent attorneys do something that made me slightly uncomfortable: they were trusting general AI tools with legal-technical work that carries professional liability, client confidentiality obligations, and inventorship consequences… and then discovering the problems after the draft was done, not before.

The problem was never the model. The problem was always the absence of structure around the model.

DeepIP and Solve Intelligence both deserve genuine credit for proving that this is now a real software category. Not a gimmick, not a buzzword, but an actual space where workflow intelligence compounds on top of language generation to produce something more defensible than a chat window full of good-sounding text.

Each tool makes a different bet about where the leverage is. DeepIP bets on breadth and Word-native depth. Solve bets on a collaborative, browser-native copilot experience. eety bets on the understanding layer, on the belief that the cheapest mistake is the one prevented before the plan is approved, before page one is generated, before the ghost in the specification becomes anyone's problem.

All three bets are reasonable. The underlying conviction is shared.

And once you see it that way… the dinner conversation gets a lot more interesting. :)

P.S. I went back to that senior attorney three months after our dinner. He had a ghost in one of his applications, which a junior associate caught in review. Not a catastrophic ghost. A subtle one. He did not connect it to our conversation, but I did. I did not say anything. Some lessons are better when they arrive on their own timeline… :(

Upload a real disclosure. Watch the gap-finding questions. Review the plan. Then decide if you trust the output more.