The "Brain" Engine

The Problem Every Patent Attorney Knows

Drafting a quality patent application is not a writing exercise: it is a comprehension exercise. An attorney who doesn't deeply understand what makes an invention novel cannot write claims that defend it. Yet modern patent practice runs at brutal speed: inventors drop a three-page disclosure on your desk and expect a filing-ready draft in days. There is rarely time for the kind of deep technical interrogation that separates a good patent from a great one.

Traditional tools don't help here. They take whatever text you give them and begin writing immediately, recycling your input back at you with marginally better grammar. The result is a document that reads like a patent but lacks genuine structural fidelity to the invention. Claims are drafted without a true model of what the core innovation is. Paragraphs repeat across sections. Novelty arguments are generic. Examiners notice.

Why Generic AI Tools Make This Worse

- ✕Context window stuffing: Most tools dump the entire disclosure into a long prompt and hope the model connects the dots, but LLMs are pattern-matchers, not patent engineers. They will produce plausible-sounding text that confuses feature descriptions with innovation claims.

- ✕No persistent knowledge: Each drafting session starts from scratch. There is no accumulated understanding of the invention. The model doesn't remember what it learned in the claims section when it writes the detailed description.

- ✕No novelty grounding: Generic tools don't reason about novelty. They draft. An attorney is left to manually verify that every output is actually anchored to what makes this invention patentably distinct, which defeats the point of using AI at all.

Why The Brain Engine Changes Everything

Before eety.ai writes a single word of legal text, it does something no other tool does: it builds a structured, 15-point model of the invention itself. Think of it as the internal mental model a great patent attorney constructs after a thorough client interview, but made explicit, persistent, and machine-readable.

This model, the Brain, captures fields such as the core Technical Problem being solved, the specific Solution Mechanism, the step-by-step Interaction Trace of how components work together, the Novelty Assessment relative to the prior art, and the broader Claim Strategy. Every section of the final draft is generated by consulting this shared model, not by re-interpreting raw input text. The result is a document where the claims, the abstract, and the detailed description are all structurally consistent with each other and with the actual invention.
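To make the idea of a shared, machine-readable model concrete, here is a minimal Python sketch. It is an illustration only, not eety.ai's actual schema: the class name `Brain`, the attribute names, and the `missing_fields` helper are all assumptions, and only the five fields named above are modeled because the rest of the 15-point model is not listed here. The sample values reuse the server-side caching invention used as an example elsewhere on this page.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Brain:
    """Hypothetical container for the invention model.

    Only the five fields named in the text are modeled; the remaining
    fields of the 15-point model are left out rather than guessed.
    """
    technical_problem: Optional[str] = None
    solution_mechanism: Optional[str] = None
    interaction_trace: Optional[list[str]] = None
    novelty_assessment: Optional[str] = None
    claim_strategy: Optional[str] = None

    def missing_fields(self) -> list[str]:
        """Return the names of fields that have not been filled in yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Illustrative values only.
brain = Brain(
    technical_problem="Cache misses slow down repeated server-side lookups.",
    solution_mechanism="A server-side cache with a tunable eviction policy.",
)
print(brain.missing_fields())
```

Because every drafting step would read from one object like this rather than from the raw disclosure, a gap shows up as a named empty field instead of as a silent guess in the text.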

In practice: Attorneys report that the Brain surface alone serves as a valuable invention disclosure review tool, catching gaps in the inventor's narrative before drafting even begins. It turns a 20-minute skimming exercise into a structured understanding artifact the attorney can stand behind.

Confidence Scoring & Guards

The Problem Every Patent Attorney Knows

The most dangerous moment in patent drafting isn't when you don't know something: it's when the AI thinks it knows something and charges ahead anyway. An incomplete disclosure with a missing mechanism, an ambiguous claim scope, or a poorly articulated technical problem should all be red flags that halt the process. Instead, most tools treat every input as sufficient and produce output that appears polished but is structurally unsound.

Attorneys then spend hours reviewing generated text only to discover foundational gaps mid-review, after significant time has already been invested. The rework is expensive, the frustration is real, and the attorney is left wondering whether the AI saved or wasted their time.

Why Generic AI Tools Make This Worse

- ✕Always confident, never honest: Every AI assistant presents its output with the same polished tone, whether it has deep context or is hallucinating a mechanism. There is no signal to the attorney about how much the model actually understood.

- ✕No drafting gates: Tools proceed straight from input to output with no intermediary checkpoint. There is no moment where the system says "I don't understand the solution mechanism well enough to draft claims around it."

- ✕No dimension-level visibility: Even if a tool flags low confidence globally, it cannot tell you whether the issue is in the technical description, the novelty, the claim structure, or all three, so attorneys can't triage efficiently.

Why Confidence Scoring Changes Everything

eety.ai tracks confidence across all 15 dimensions of the Brain individually. Each field is scored Green, Amber, or Red based on the quality and completeness of available information. If any critical dimension falls into Amber or Red, eety.ai architecturally refuses to proceed to drafting and instead redirects the attorney to resolve the specific gap first.

This is not a warning dialog. It is a hard gate, the same way a surgeon won't proceed without the right imaging. It forces a discipline that great patent attorneys already follow intuitively but that AI has historically abandoned in pursuit of appearing productive.
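The gate described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the product's implementation: the names `Confidence`, `DraftingBlocked`, and `check_gate` are hypothetical, which fields count as critical is chosen arbitrarily for the example, and treating an unscored critical field as Red is our own assumption.

```python
from enum import Enum


class Confidence(Enum):
    GREEN = "green"
    AMBER = "amber"
    RED = "red"


class DraftingBlocked(Exception):
    """Raised when at least one critical field is not Green."""


def check_gate(scores: dict[str, Confidence], critical: set[str]) -> None:
    """Refuse to proceed if any critical field is Amber or Red.

    Assumption: a critical field with no score at all is treated as Red.
    """
    blocking = sorted(
        name for name in critical
        if scores.get(name, Confidence.RED) is not Confidence.GREEN
    )
    if blocking:
        raise DraftingBlocked("Resolve before drafting: " + ", ".join(blocking))


scores = {
    "technical_problem": Confidence.GREEN,
    "solution_mechanism": Confidence.AMBER,
    "claim_strategy": Confidence.RED,  # not critical in this example
}
critical = {"technical_problem", "solution_mechanism"}

try:
    check_gate(scores, critical)
except DraftingBlocked as exc:
    print(exc)
```

Raising an exception rather than returning a warning mirrors the hard-gate idea: the drafting call cannot run until the named fields are resolved, and the message tells the attorney exactly which ones.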

In practice: Attorneys using eety.ai find that the Amber/Red flags consistently surface the exact questions they would have eventually asked the inventor anyway, but surface them before drafting begins, not after two days of revision.

Inventor Interview Loop

The Problem Every Patent Attorney Knows

The inventor interview is the single most important input to a great patent application, and most attorneys never have enough time to do it properly. Inventors are engineers, not lawyers. They describe what their product does, not what makes it technically novel over the prior art. Getting to the exact mechanism, the edge case that makes it work, or the specific sequence of steps that constitutes the inventive contribution requires targeted, expert questioning.

Most practices are forced to work with a 30-minute call and a three-page form. The result is that attorneys draft around gaps: inferring what the inventor meant, guessing at mechanisms, and hoping the examiner doesn't probe too deeply into the same unknowns.

Why Generic AI Tools Make This Worse

- ✕No active elicitation: Standard tools are passive: they draft from what they're given. They do not identify what's missing and ask for it. The burden of knowing what to provide falls entirely on the attorney.

- ✕Generic questions, not targeted ones: If a tool does prompt for clarification, it asks vague things like "Can you describe your invention?" rather than "In step 3, how does the calibration module reconcile conflicting sensor readings if the primary signal is degraded?"

- ✕No loop: There is no iterative interview dynamic. One round of questions means one set of answers, even though real inventor interviews are back-and-forth conversations that unfold over multiple exchanges.

Why The Inventor Interview Loop Changes Everything

When eety.ai detects gaps in the Brain (a missing mechanism, an unclear component interaction, an unresolved novelty question), it doesn't guess. It generates 3 to 5 precise, engineer-perspective questions targeted at exactly those gaps. These questions are delivered one at a time in the chat interface, creating an organic, conversation-like flow.

The questions are not generic. They are derived from the specific missing fields in the Brain model. If the system doesn't understand how two components communicate, it asks about that specifically. If the novelty dimension is under-specified, it probes the technical differentiation from competing approaches. Each answer feeds directly back into the Brain, refining the model before any drafting begins.

In practice: Many attorneys forward eety.ai's questions directly to their inventor client via email, using the AI's targeted queries as a structured follow-up that replaces a second call and accelerates the intake process significantly.

Surgical Delta Refinement

The Problem Every Patent Attorney Knows

Patent prosecution is iterative by nature. You gather information in waves: first the disclosure, then the inventor Q&A, then a prior art search result, then a client correction. Each new piece of information should sharpen an existing understanding, not replace it. But with typical AI tools, every new input risks wiping what was previously established. There is no safe way to deepen the model's understanding without risking that a good answer unravels an earlier one.

Attorneys working with LLM tools frequently report the "erasing good work" problem, where adding more context causes the model to restructure parts of the application that were already dialed in, creating fresh inconsistencies in sections that were previously reviewed and approved.

Why Generic AI Tools Make This Worse

- ✕Context rewriting: When you give a standard LLM new information, its next response implicitly reweights everything, including the parts it had right before. There's no concept of "only update what changed."

- ✕No audit trail: Standard tools don't show you what changed between one model state and the next. You can't see what a new answer shifted, which means you can't verify that previously confirmed elements are intact.

- ✕Fragile accumulated knowledge: The more context a session accumulates, the more unstable general LLM outputs become. Contradictions creep in silently, and the attorney is the last line of defense against them.

Why Surgical Delta Refinement Changes Everything

eety.ai uses a "Chief Architect" pattern for all knowledge updates. When new information arrives, whether from an inventor answer, a document upload, or a manual correction, the system computes a precise delta: only the fields in the Brain that should change, given the new input. This delta is then deep-merged non-destructively into the existing model.

Every update produces an explicit change summary shown directly in the chat, telling the attorney exactly which Brain fields were modified and how. Previously confirmed fields that were not affected by the new input remain exactly as they were. The understanding grows like a legal brief with tracked changes: controlled, visible, and reversible.
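A non-destructive deep merge with a change summary can be sketched as follows. This is a generic illustration of the technique, not eety.ai's code: the function name `apply_delta`, the dictionary representation of the Brain, and the summary format are assumptions made for the example.

```python
from copy import deepcopy
from typing import Any


def apply_delta(
    model: dict[str, Any], delta: dict[str, Any], prefix: str = ""
) -> tuple[dict[str, Any], list[str]]:
    """Deep-merge `delta` into a copy of `model`.

    Returns the new model and a list of human-readable changes.
    The input model is never mutated, so the prior state stays available.
    """
    merged = deepcopy(model)
    changes: list[str] = []
    for key, new_value in delta.items():
        path = f"{prefix}{key}"
        old_value = merged.get(key)
        if isinstance(old_value, dict) and isinstance(new_value, dict):
            # Recurse so sibling keys inside a nested field are preserved.
            merged[key], nested = apply_delta(old_value, new_value, path + ".")
            changes.extend(nested)
        elif old_value != new_value:
            merged[key] = deepcopy(new_value)
            changes.append(f"{path}: {old_value!r} -> {new_value!r}")
    return merged, changes


# Illustrative values only.
brain = {
    "solution_mechanism": {"cache": "LRU", "scope": "server-side"},
    "claim_strategy": "method claims first",
}
delta = {"solution_mechanism": {"cache": "LFU"}}

updated, summary = apply_delta(brain, delta)
print(summary)
```

Only the path named in the delta changes; `scope` and `claim_strategy` are carried over untouched, and because the original dictionary is left intact, reverting is a matter of keeping the earlier version.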

In practice: Attorneys can hand an updated inventor memo to eety.ai midway through a project and trust that only the relevant Brain fields will be updated, without having to re-review sections of the model that were already validated.

Plan-Before-You-Draft

The Problem Every Patent Attorney Knows

One of the biggest sources of rework in AI-assisted drafting is misalignment between what the attorney intended and what the tool produced. An attorney asks for "claims covering the server-side caching mechanism" and receives 15 claims that include a mobile client implementation that was expressly left out of scope. The output looks impressive at first glance. It only falls apart on careful review, which is exactly the most expensive moment for it to fail.

The root cause is simple: tools go straight from instruction to execution without any collaborative planning phase. There is no moment where the attorney can review the drafting strategy before it runs. By the time you see what the AI intended, it has already committed.

Why Generic AI Tools Make This Worse

- ✕Monolithic output: Most tools generate the entire document in a single pass. If the strategy was wrong at step one, every subsequent section inherits that error, and you don't find out until you've reviewed 20 pages.

- ✕No attorney review gate: There is no checkpoint between "the AI decided what to draft" and "the AI drafted it." Attorneys are passengers, not partners, in the generation process.

- ✕Opaque reasoning: When a tool produces an unexpected output, there is no audit trail showing what strategy it followed. You cannot understand why it made the choices it made, which means you cannot efficiently correct it.

Why Plan-Before-You-Draft Changes Everything

Before eety.ai executes any drafting operation, it presents the attorney with a structured Draft Plan: a numbered list of discrete steps it proposes to take. For example: Step 1: Draft independent claim 1 covering the server-side caching mechanism. Step 2: Draft three dependent claims refining the eviction policy. Step 3: Draft the Abstract. Each step is visible, reviewable, and editable before the first word of legal text is generated.

The attorney approves, or adjusts, the plan. Only then does the background execution begin. This single checkpoint eliminates the most common source of AI drafting rework: misaligned scope assumptions that weren't caught until page 15.
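The approve-then-execute checkpoint can be sketched like this. It is a minimal illustration, assuming a hypothetical `DraftPlan` class and `execute` function; the real drafting step is replaced by a placeholder string, and the rule that an edit clears a prior approval is our own assumption.

```python
from dataclasses import dataclass


@dataclass
class DraftPlan:
    """Hypothetical plan object: an ordered list of steps plus an approval flag."""
    steps: list[str]
    approved: bool = False

    def edit_step(self, index: int, text: str) -> None:
        """Replace one step. Assumption: any edit invalidates a prior approval."""
        self.steps[index] = text
        self.approved = False

    def approve(self) -> None:
        self.approved = True


def execute(plan: DraftPlan) -> list[str]:
    """Run the plan only after explicit approval (drafting itself is stubbed)."""
    if not plan.approved:
        raise PermissionError("Draft plan has not been approved by the attorney.")
    return [f"[drafted] {step}" for step in plan.steps]


plan = DraftPlan(steps=[
    "Draft independent claim 1 covering the server-side caching mechanism.",
    "Draft three dependent claims refining the eviction policy.",
    "Draft the Abstract.",
])
plan.edit_step(1, "Draft two dependent claims refining the eviction policy.")
plan.approve()
for line in execute(plan):
    print(line)
```

Keeping the approval flag on the plan itself means there is no code path from instruction to output that bypasses the attorney's review.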

In practice: Attorneys frequently modify 1–2 plan steps before approving: adjusting claim count, narrowing section scope, or reordering priority. This micro-review takes under two minutes and routinely prevents hours of post-draft revision.

Visual Cascade Pipeline

The Problem Every Patent Attorney Knows

Legal practice operates on accountability. An attorney who signs a patent application is personally responsible for its content. Using an AI tool that operates as a black box, where text appears from a spinner with no explanation of how it got there, is not just uncomfortable; it is professionally incompatible with the obligation to exercise independent legal judgment.

Attorneys who cannot explain why a claim was drafted a particular way cannot defend it in prosecution, cannot explain their strategy to clients, and cannot build the kind of professional intuition that makes AI a force-multiplier rather than a crutch.

Why Generic AI Tools Make This Worse

- ✕Spinner-to-output generation: You submit a prompt, a spinner runs, and text appears. There is no narrative of what reasoning occurred. The attorney is handed a product, not a process.

- ✕No traceability: If a claim introduces an unexpected limitation, there is no log of which input drove that decision. Tracing errors is manual, tedious, and often impossible.

- ✕Trust without verification: Attorneys are forced to either trust the output wholesale, which is legally irresponsible, or re-analyze every line from scratch, which eliminates the efficiency benefit of AI entirely.

Why The Visual Cascade Pipeline Changes Everything

As each stage of a drafting chain completes, eety.ai writes a structured narrative message into the chat, explaining what it learned, what decisions it made, and why. When it drafts Claim 1, it summarizes the specific Brain fields it drew on and the novelty rationale it prioritized. When it transitions from claims to specification, it notes which dependencies it maintained.

This creates an auditable reasoning trail: a sequential narrative the attorney can read alongside the draft. You can see exactly what deductive leaps the model made. You know when to agree with them and when to redirect.

In practice: Senior attorneys report that the cascade narrative is often as valuable as the draft itself, giving them the reasoning context to quickly edit claims with confidence, rather than re-deriving intent from the output text alone.

Rotated Activity Stream

The Problem Every Patent Attorney Knows

Patent drafting jobs are not instant. A multi-section application can take 3–5 minutes of intensive AI processing. During that time, most tools show a generic spinner or a "Generating…" message. For a busy attorney, that is 3–5 minutes of uncertainty: Is it stuck? Has it encountered an error? Is it going down the right path? The absence of feedback creates a kind of cognitive dead zone where trust erodes and anxiety builds.

More practically, attorneys can't make productive use of a wait state if they don't know how long it will last or what's happening. They either stare at a spinner or tab away and miss key moments when intervention would be valuable.

Why Generic AI Tools Make This Worse

- ✕Generic progress indicators: A "Generating…" spinner communicates nothing about what is happening, how far along the process is, or what specific step is being executed.

- ✕No sub-step visibility: Multi-step drafting processes have internal stages (understanding parsing, claim structuring, dependency analysis), none of which are surfaced to the user in real time.

- ✕No early anomaly detection: If the system encounters an unexpected edge case mid-process, attorneys have no indicator that something unusual is happening until the final broken output appears.

Why The Rotated Activity Stream Changes Everything

While processing runs in the background, eety.ai displays a live, sub-second updated activity stream showing exactly what cognitive step is being executed at any given moment. Messages rotate through in real time: "Analyzing core novelty dimensions…", "Structuring independent claim hierarchy…", "Checking backward references for consistency…"

This isn't decorative. Each status reflects an actual internal processing stage, giving the attorney a genuine window into the system's current operation. Long jobs feel purposeful, not frozen. And the rotated stream acts as an early indicator: if you're seeing "Resolving ambiguous interaction trace…" for an unusually long time, that's a signal the Brain has something to surface.

In practice: Attorneys report significantly higher comfort levels during long jobs when the activity stream is running. The visibility transforms waiting from frustration into active observation.

Automated Patent Drawings

The Problem Every Patent Attorney Knows

Patent drawings are non-negotiable for hardware, mechanical, and system inventions, and they are a consistent bottleneck. Attorneys either rely on inventors who struggle to produce USPTO-compatible illustrations on tight timelines, or they engage technical illustrators who add cost, coordination overhead, and delay. Neither solution scales with a high-volume drafting practice.

The irony is that the information needed to produce drawings is exactly what's already in the disclosure. The architectural diagram, the system block structure, the component hierarchy: it's all described in the text. The gap is purely execution: translating described structure into a properly formatted visual.

Why Generic AI Tools Make This Worse

- ✕No drawing support at all: Most AI patent tools are text-only. They generate the specification but leave drawings entirely to the attorney, creating a two-system workflow where the AI output and the visuals are developed in isolation.

- ✕Canvas-editor approaches: Some tools offer a drawing canvas where attorneys manually build diagrams. This is simply a different form of manual labor: faster than a blank canvas, but still requiring significant hands-on time from a licensed professional.

- ✕No figure-claim alignment: Even when drawings are eventually produced, there is no automated alignment between figure labels, claim element references, and the detailed description. That reconciliation falls back on the attorney.

Why Automated Patent Drawings Change Everything

eety.ai translates the Brain's structured invention model directly into figure layouts. It identifies the key system components, determines the logical hierarchy between them, and generates stylized, USPTO-compatible block diagrams that visually represent the architecture described in the specification. No canvas. No illustrator. No separate workflow.

The figures are designed specifically to complement the claims: the reference numerals, component labels, and structural relationships all emerge from the same Brain that drives the text. This means drawings and specification are naturally consistent from the moment of generation, eliminating a class of amendment triggers that commonly arise from figure-specification mismatches.
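As a rough illustration of how labels and reference numerals can come from one source, the sketch below turns a list of component names into a simple stacked block diagram in SVG using Python's standard library. Everything here is an assumption made for the example: the function name `block_diagram`, the layout constants, and the numbering scheme (starting at 100 in steps of 10). It makes no claim about eety.ai's layout engine or about meeting any patent office's drawing rules.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)


def block_diagram(components: list[str], first_numeral: int = 100, step: int = 10) -> str:
    """Render components as stacked boxes, each labeled with a reference numeral."""
    box_w, box_h, gap = 240, 50, 30
    height = len(components) * (box_h + gap) + gap
    svg = ET.Element(
        f"{{{SVG_NS}}}svg",
        {"width": str(box_w + 40), "height": str(height)},
    )
    for i, name in enumerate(components):
        y = gap + i * (box_h + gap)
        numeral = first_numeral + i * step
        # One group per component so box and label can be selected together.
        group = ET.SubElement(svg, f"{{{SVG_NS}}}g", {"id": f"ref-{numeral}"})
        ET.SubElement(group, f"{{{SVG_NS}}}rect", {
            "x": "20", "y": str(y), "width": str(box_w), "height": str(box_h),
            "fill": "none", "stroke": "black",
        })
        label = ET.SubElement(group, f"{{{SVG_NS}}}text", {"x": "30", "y": str(y + 30)})
        label.text = f"{name} {numeral}"
        if i > 0:
            # Connector from the bottom of the previous box to the top of this one.
            ET.SubElement(svg, f"{{{SVG_NS}}}line", {
                "x1": "140", "y1": str(y - gap), "x2": "140", "y2": str(y),
                "stroke": "black",
            })
    return ET.tostring(svg, encoding="unicode")


figure = block_diagram(["Client", "Cache", "Origin server"])
print(figure)
```

Since the numeral is computed once and written into both the element id and the label, the same value could also be handed to the text generator, which is the consistency property described above.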

In practice: Attorneys report that eety.ai drawings are routinely usable as filing-quality illustrations with minor tweaks, saving several hours of illustrator coordination and review per application.

SVG Figure Editing

The Problem Every Patent Attorney Knows

AI-generated drawings are a tremendous starting point, but they are rarely perfect on the first pass. An arrow points the wrong direction. A component label is missing from one figure but present in another. A box needs to be broken into two distinct elements to better reflect the system architecture. These are small, precise corrections that require direct manipulation of the image, not a new text prompt.

The problem is that most AI-generated images are raster files (JPEGs or PNGs) that cannot be edited without destructive image manipulation. The attorney is stuck making a choice between accepting an imperfect figure, re-generating from scratch with a modified prompt (which may introduce new errors), or exporting to a separate illustration tool and re-integrating manually.

Why Generic AI Tools Make This Worse

- ✕Raster-only output: PNG and JPEG figures cannot be meaningfully edited. Resizing degrades quality, and element-level changes require destructive pixel manipulation or a complete redraw.

- ✕Prompt iteration as the only fix: When a drawing is wrong, tools expect you to describe the correction in words and regenerate, which may fix one issue while introducing two others. Precision editing via text prompts is hit-or-miss at best.

- ✕Broken workflow continuity: Any correction that requires an external tool (Illustrator, Inkscape, Visio) breaks the drafting workflow completely, requiring file export, external editing, and manual re-import.

Why SVG Figure Editing Changes Everything

eety.ai generates figures as vector SVGs: fully editable structural files where every element (box, arrow, label, connector) is a discrete, selectable object. When a figure needs adjustment, the attorney opens the built-in SVG editor directly within the drafting interface, selects the element, and makes the precise change, without leaving the application and without regenerating from scratch.

This means the correction loop for drawings is as fast and precise as the correction loop for text. Add a label, move a component, split a block, adjust an arrow: all in the same environment, at vector quality, with no export-import friction. The drawing and the specification evolve together.
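Why vector output makes element-level edits possible is easy to show in code. The sketch below, which assumes a hypothetical figure whose boxes are wrapped in groups with ids, changes one label without touching anything else. The `relabel` helper and the sample figure are illustrative only and say nothing about how the built-in editor is implemented.

```python
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)

# Hypothetical figure: one labeled block, addressable by its group id.
FIGURE = """<svg xmlns="http://www.w3.org/2000/svg" width="200" height="80">
  <g id="block-cache">
    <rect x="10" y="10" width="180" height="50" fill="none" stroke="black"/>
    <text x="20" y="40">Cache 110</text>
  </g>
</svg>"""


def relabel(svg_text: str, element_id: str, new_label: str) -> str:
    """Change the text label of one group, leaving every other element as is."""
    root = ET.fromstring(svg_text)
    for group in root.iter(f"{{{SVG_NS}}}g"):
        if group.get("id") == element_id:
            text = group.find(f"{{{SVG_NS}}}text")
            if text is None:
                raise ValueError(f"Element {element_id!r} has no label to edit.")
            text.text = new_label
            return ET.tostring(root, encoding="unicode")
    raise KeyError(element_id)


edited = relabel(FIGURE, "block-cache", "Cache module 110")
print(edited)
```

The same edit on a PNG would mean repainting pixels; here it is a one-field change on a named object, and the rectangle's geometry is untouched.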

In practice: Attorneys routinely make 3–5 surgical figure edits per application directly in eety.ai's SVG editor in under 10 minutes, work that previously required scheduling a session with an outside illustrator.

Style DNA Extraction

The Problem Every Patent Attorney Knows

Every experienced patent attorney has a voice. A particular way of introducing embodiments. A preferred hedging structure for independent claims. A consistent sentence rhythm in the detailed description. This style is not decoration: it reflects years of craft, specific examiner feedback, client preference, and jurisdictional calibration. It is a professional asset that takes a decade to develop.

When AI tools draft in a generic legal register, they produce text that reads like a template, not like you. Clients notice. Partners notice. And the attorney faces a choice between accepting generic output or spending hours rewriting it into their own voice, which erodes the efficiency case for using AI at all.

Why Generic AI Tools Make This Worse

- ✕One-size-fits-all register: Generic tools produce a uniform legal tone calibrated to no one in particular. The output sounds like a competent paralegal, not like the attorney whose reputation is attached to the filing.

- ✕Superficial style settings: Some tools offer "formal" or "concise" toggles. These adjust surface-level tone without ever capturing the specific structural and semantic patterns that define an individual attorney's professional voice.

- ✕Jurisdictional unawareness: The hedging conventions, claim preamble structures, and embodiment disclosure patterns that work for a US continuation practice are different from those optimized for EPO examination. Generic tools don't distinguish between them.

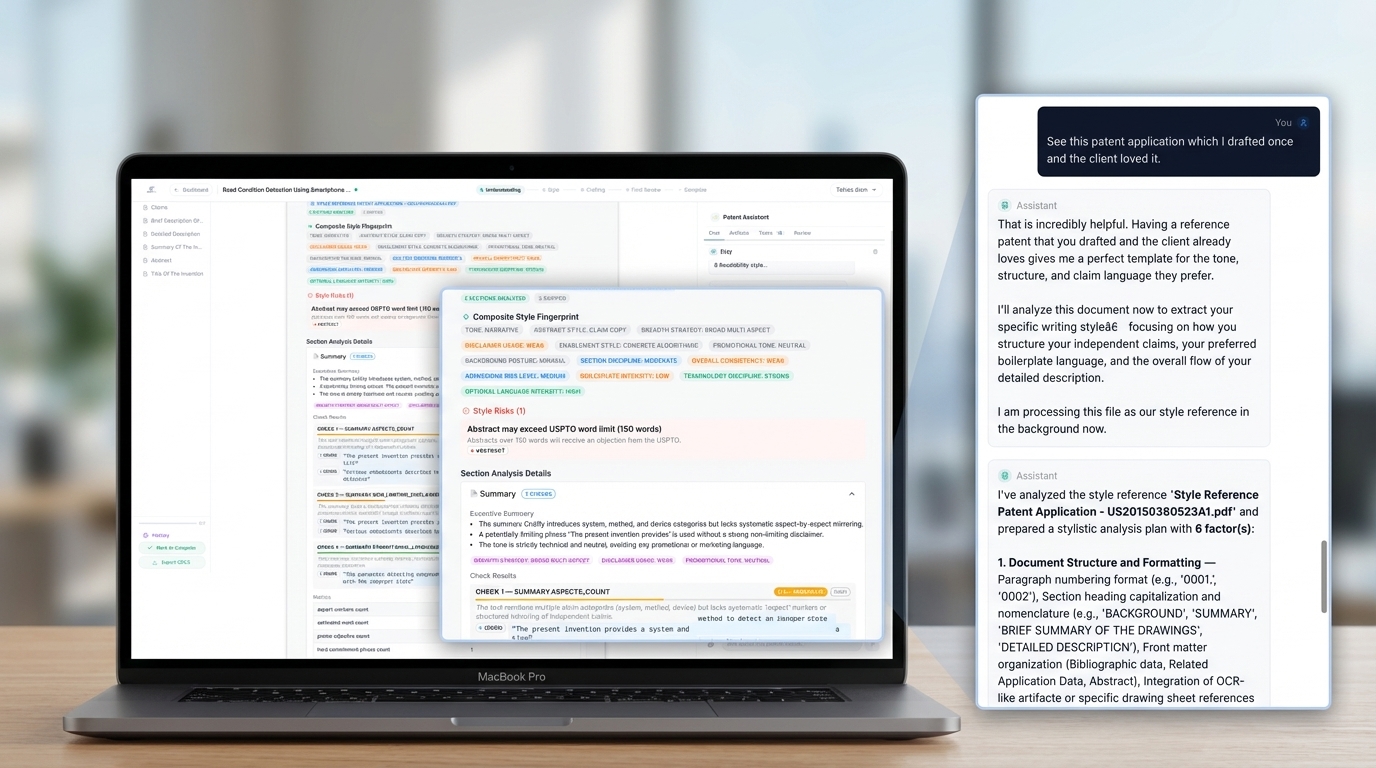

Why Style DNA Extraction Changes Everything

eety.ai's Style DNA system analyzes a set of reference patent applications written by the attorney, or sourced from their firm's existing portfolio, and algorithmically extracts a precise stylistic fingerprint. This is not a surface-level tone analysis. It captures hedging semantics, embodiment disclosure patterns, claim preamble structures, antecedent basis conventions, and sentence cadence across sections.

Every subsequent draft is generated with this profile active, meaning the output doesn't just sound like a competent patent application, it sounds like your patent application. Partners reviewing AI-assisted work report that it requires significantly less stylistic revision when Style DNA is enabled.

In practice: Attorneys who enable Style DNA on a set of 5–10 reference filings find that the AI output sits comfortably within their established drafting voice from the first generation, eliminating most of the stylistic editing in that revision cycle.

Team Workspace and Role-Based Collaboration

The Problem Every Patent Attorney Knows

Patent prosecution is almost never a solo exercise. Senior attorneys review claims. Paralegals track status. Associates draft specification sections. Technical advisors weigh in on the science. Yet virtually every AI patent drafting tool is designed as a single-user workspace, with no mechanism for sharing, assigning, or co-reviewing a matter within the same system.

The result is fragmented workflows: drafts emailed back and forth, comments pasted into separate Word documents, and no single authoritative version of the application until someone manually consolidates everything at the end.

Why Generic AI Tools Make This Worse

- ✕Single-user silos: Every AI tool issues one login and one workspace. There is no concept of a shared matter, so team review always happens outside the tool.

- ✕No access control: Even if files can be shared, there is no mechanism to distinguish between who can edit and who can only view, creating version control chaos.

- ✕No attribution: When multiple people touch an AI-generated draft, there is no record of who made which change, which matters for professional responsibility and billing.

Why Team Workspace Changes Everything

eety.ai treats collaboration as a first-class feature. Workspace owners can invite colleagues by email with a single click. Each invitee receives a branded invitation and creates their account directly linked to the shared workspace. Matters can then be assigned to any team member with either Editor or Viewer access.

Every matter in the dashboard is clearly labeled as either Owned or Shared by, followed by the name of the person who assigned it. There is never any ambiguity about which version is authoritative or who is responsible for which draft.

In practice: Firms use eety.ai's workspace feature to assign matters to associates during the drafting phase and then transfer ownership to the supervising partner for review, all without leaving the platform or emailing a single file.

Waterproof Audit Trail

The Problem Every Patent Attorney Knows

Patent practice carries significant professional responsibility. When an application is filed, an attorney's name is on it. If questions arise later about whether a particular claim was intentionally drafted a certain way, whether a prior art reference was considered, or whether an AI-generated section was reviewed and approved, there must be a record. Currently, there is none.

The absence of a traceable change history in AI-assisted drafting creates real liability exposure. Firms cannot demonstrate diligence, cannot reconstruct decision rationale during prosecution, and cannot protect themselves if an AI-generated error is later contested.

Why Generic AI Tools Make This Worse

- ✕No version history: Most tools produce a current draft and nothing else. There is no record of what the draft looked like before the last change, who made it, or why.

- ✕AI actions are invisible: When AI generates or modifies text, that action is not logged anywhere. You cannot distinguish AI edits from human edits after the fact.

- ✕No actor identity: In multi-user environments, all edits are attributed to the account owner, making team accountability impossible.

Why the Audit Trail Changes Everything

eety.ai maintains a comprehensive, tamper-evident audit log for every matter. Every AI drafting step is recorded with a timestamp, step description, and the specific Brain fields it consulted. Every human edit is recorded with the editor's identity and the exact content delta. Both streams are merged into a single chronological timeline accessible from within the matter.

This timeline is not a debugging tool for engineers. It is a professional record. Attorneys can review it to verify that every section was touched by a licensed professional before filing, and to reconstruct the reasoning behind any drafting decision.
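One common way to make a log tamper-evident is a hash chain, where each entry stores a hash of its own content plus the hash of the entry before it. The sketch below shows that general technique; it is an assumption for illustration, since how eety.ai achieves tamper evidence is not described here. The function names, entry fields, actor names, and timestamps are all hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], actor: str, kind: str, detail: str, timestamp: str) -> None:
    """Append an AI step or human edit, chained to the previous entry."""
    body = {
        "actor": actor,
        "kind": kind,
        "detail": detail,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash and link; any altered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "ai", "draft_step",
             "Drafted claim 1 from solution_mechanism", "2024-01-01T10:00:00Z")
append_entry(log, "attorney@example.com", "human_edit",
             "Narrowed claim 1 preamble", "2024-01-01T10:05:00Z")
print(verify(log))   # chain is intact

log[0]["detail"] = "Edited after the fact"
print(verify(log))   # the altered entry no longer matches its hash
```

AI steps and human edits share one list, so the single chronological timeline and the integrity check come from the same structure.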

In practice: Firms that use eety.ai for malpractice-sensitive matters treat the audit trail as part of the file, exporting it alongside the application as evidence of diligent AI supervision.

9 Filing Templates Across 4 Jurisdictions

The Problem Every Patent Attorney Knows

A US non-provisional application looks nothing like an EPO application or a PCT filing. The section structure differs. The claim format conventions differ. The hedging language required to satisfy an EPO examiner is different from what a USPTO examiner expects. The paragraph numbering requirements, embodiment disclosure patterns, and abstract conventions all vary by jurisdiction. Attorneys who practice internationally maintain separate templates, format guides, and checklists for each office.

Generic AI tools ignore all of this. They produce a single format of output regardless of where the application will be filed, leaving attorneys to manually reformat, restructure, and re-check every cross-jurisdictional filing.

Why Generic AI Tools Make This Worse

- ✕US-only output: Virtually every AI patent tool is calibrated to USPTO practice. EPO-format two-part claims, PCT summary requirements, and UKIPO statement-of-invention conventions are simply absent.

- ✕No formatting enforcement: Even tools that claim multi-jurisdiction support produce plain text and leave formatting to the attorney. There is no structural enforcement of jurisdiction-specific rules.

- ✕Reformat on export: The attorney must remember which jurisdiction they are working in and manually apply the correct conventions before filing, reintroducing exactly the errors AI was supposed to prevent.

Why Multi-Jurisdiction Templates Change Everything

eety.ai ships with nine filing templates spanning four patent offices: US Standard, US Continuation, US CIP, US Provisional, EPO One-Part Form, EPO Two-Part Form, PCT Standard, PCT Software, GB Standard, and GB with Statement of Invention. The attorney selects the target jurisdiction at matter creation, and every downstream action automatically adapts: section structure, claim conventions, formatting rules, drafting language, and export formatting are all jurisdiction-aware from the first keystroke.

This is not a cosmetic difference. The drafting prompts sent to the AI, the style checklist rules applied, and the section heading conventions enforced all change based on the selected template. The attorney does not need to remember the rules; the system enforces them.

In practice: Attorneys filing the same invention in both the US and Europe create two separate eety.ai matters with different templates, then let the AI adapt the drafting language and structure for each office without any manual reformatting.

Continuation and CIP Intelligence

The Problem Every Patent Attorney Knows

Continuation and CIP practice is one of the highest-stakes and most error-prone areas of US patent prosecution. The cross-reference to related applications must cite the exact parent application number and filing date, with no interpolation. The scope of the continuation must be analyzed against the parent to identify what new subject matter can be claimed and what is already disclosed. Claim differentiation from the parent application must be deliberate, not accidental.

Generic AI tools do not understand any of this. Feed them a parent patent and ask for a continuation, and they will produce a plausible-sounding application that may cite a hallucinated application number and may claim scope that inadvertently duplicates the parent's already-patented claims.

Why Generic AI Tools Make This Worse

- ✕Hallucinated cross-references: Standard LLMs will invent application numbers and filing dates when asked to draft a continuation, creating documents that cannot be filed without manual correction of every citation.

- ✕No scope gap analysis: The critical step in continuation drafting is identifying what the parent application has already claimed and what new ground the continuation can legitimately cover. Generic tools skip this entirely.

- ✕No parent metadata extraction: Accurate continuation drafting requires reliably extracting the filing date, application number, and publication number from the parent. Most tools guess rather than extract.

Why Continuation Intelligence Changes Everything

eety.ai's dedicated continuation workflow begins with structured extraction of the parent application's metadata, pulling filing date, application number, and patent number directly from the uploaded parent document with no interpolation. These values are locked into the cross-reference section verbatim before any drafting begins.
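The "extract, never interpolate" idea can be sketched as follows. This is a minimal illustration, not eety.ai's extraction pipeline: the regular expressions, the `ParentMetadata` class, and the function names are assumptions, the patterns only cover one common US citation style, and the application number in the usage note is made up. The key behavior is that a missing value raises an error instead of being guessed.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: values cannot be altered once extracted
class ParentMetadata:
    application_number: str
    filing_date: str


# Hypothetical patterns for one common US citation style.
APP_NO = re.compile(r"Application No\.\s*(\d{2}/\d{3},\d{3})")
FILED = re.compile(r"[Ff]iled\s+(?:on\s+)?([A-Z][a-z]+ \d{1,2}, \d{4})")


def extract_parent_metadata(text: str) -> ParentMetadata:
    """Return values copied verbatim from the text, or fail loudly."""
    app = APP_NO.search(text)
    filed = FILED.search(text)
    missing = [name for name, match in (("application number", app), ("filing date", filed))
               if match is None]
    if missing:
        raise ValueError("not found in parent document: " + ", ".join(missing))
    return ParentMetadata(app.group(1), filed.group(1))


def cross_reference(meta: ParentMetadata) -> str:
    """Build the cross-reference sentence from the locked values only."""
    return (f"This application is a continuation of U.S. Application "
            f"No. {meta.application_number}, filed {meta.filing_date}.")
```

Given the text "U.S. Patent Application No. 16/123,456, filed on March 4, 2020", the sketch returns exactly those two strings; given a text with no filing date, it raises rather than inventing one.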

The system then performs a scope gap analysis: comparing the parent application's claimed subject matter against the new disclosure to identify which aspects of the invention are not yet claimed and are eligible for continuation coverage. The drafting workflow is sequenced to cover this new ground first, with explicit differentiation from the parent's claim scope built into the plan.
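In its simplest form, a scope gap analysis is a set comparison. The sketch below assumes each feature has already been reduced to a normalized label, which is a large simplification: a real comparison of claim scope needs semantic and legal judgment. The function names `scope_gap` and `drafting_order` are hypothetical.

```python
def scope_gap(parent_claimed: set[str], new_disclosure: set[str]) -> dict[str, list[str]]:
    """Split disclosed features into those not yet claimed and those the parent covers."""
    return {
        "eligible": sorted(new_disclosure - parent_claimed),
        "already_claimed": sorted(new_disclosure & parent_claimed),
    }


def drafting_order(gap: dict[str, list[str]]) -> list[str]:
    """Sequence the plan so unclaimed subject matter is covered first."""
    return gap["eligible"] + gap["already_claimed"]
```

For a parent claiming a "temperature sensor" and a "wireless transmitter", and a disclosure that also describes a "solar charging circuit", only the charging circuit lands in `eligible` and it is placed first in the drafting order.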

In practice: Attorneys handling high-volume continuation portfolios use eety.ai to draft continuation applications in hours rather than days, with confidence that cross-references are accurate and claim scope is deliberately differentiated from the parent.

Style Preference Compliance Checker

The Problem Every Patent Attorney Knows

Every patent firm has drafting conventions that go beyond basic legal requirements. Specific claim preamble structures. Preferred antecedent basis handling. Particular embodiment disclosure sequences. Paragraph numbering formats. These preferences accumulate over years and represent the institutional knowledge of a practice group. When AI drafts without understanding these conventions, the output requires extensive stylistic revision before it is firm-ready.

Style compliance is not cosmetic. Inconsistent claim preambles create prosecution risk. Missing antecedent basis creates indefiniteness issues. These are not preferences to be corrected at leisure; they are matters of drafting discipline that affect the quality of the granted patent.

Why Generic AI Tools Make This Worse

- ✕No firm-level standards awareness: Generic tools don't know your firm's conventions and have no mechanism for you to define them. Every output must be manually checked against every style rule.

- ✕No scored feedback: Even when attorneys review AI output for style, the review is unstructured. There is no systematic report of which rules passed, which failed, and where the violations occur in the document.

- ✕Style and substance conflated: Attorneys end up reviewing style and legal substance simultaneously during the same pass, increasing cognitive load and the chance that something is missed.

Why the Style Compliance Checker Changes Everything

eety.ai's Style Preference Compliance Checker runs a structured, multi-point review of a completed draft against a configurable firm-level checklist. The checklist covers paragraph numbering convention, antecedent basis completeness, claim preamble structure, hedging language consistency, embodiment disclosure format, and cross-reference accuracy.

Each rule produces a pass, warning, or fail result with a specific citation to the relevant passage in the draft. The attorney receives a scored report that separates style issues from legal issues, making the revision cycle faster and more systematic than any manual review process.
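A checklist of this shape might be sketched as below. The two rules, their thresholds, and all names (`Finding`, `run_checklist`, `score`) are assumptions chosen for illustration; a firm's real checklist would be configurable and far richer. Each rule returns a result plus a citation pointing at the passage that triggered it.

```python
import re
from dataclasses import dataclass
from enum import Enum


class Result(Enum):
    PASS = "pass"
    WARNING = "warning"
    FAIL = "fail"


@dataclass
class Finding:
    rule: str
    result: Result
    citation: str  # where the issue occurs; empty when the rule passes


def check_paragraph_numbering(paragraphs: list[str]) -> Finding:
    # Hypothetical convention: paragraphs are numbered [0001], [0002], ...
    for i, text in enumerate(paragraphs, start=1):
        if not text.startswith(f"[{i:04d}]"):
            return Finding("paragraph_numbering", Result.FAIL, f"paragraph {i}")
    return Finding("paragraph_numbering", Result.PASS, "")


def check_hedging(paragraphs: list[str]) -> Finding:
    # Hypothetical firm rule: warn on absolute terms in the specification.
    absolute = re.compile(r"\b(must|always|never|essential)\b", re.IGNORECASE)
    for i, text in enumerate(paragraphs, start=1):
        match = absolute.search(text)
        if match:
            return Finding("hedging_language", Result.WARNING,
                           f"paragraph {i}: '{match.group(0)}'")
    return Finding("hedging_language", Result.PASS, "")


def run_checklist(paragraphs: list[str]) -> list[Finding]:
    return [check(paragraphs) for check in (check_paragraph_numbering, check_hedging)]


def score(findings: list[Finding]) -> float:
    """Fraction of rules that passed outright."""
    return sum(f.result is Result.PASS for f in findings) / len(findings)
```

Keeping each rule as its own function is what makes the report separable: a style finding never has to be untangled from a legal one.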

In practice: Supervising partners at firms using eety.ai report that associates' AI-assisted drafts arrive with the style checklist report already completed, substantially reducing the partner time spent on stylistic correction.

Section-Level Final Review and Annotation

The Problem Every Patent Attorney Knows

Pre-filing review of a patent application is one of the highest-leverage tasks in prosecution, and one of the most time-consuming. A thorough final review requires checking that claims are adequately supported, that the specification enables every claimed embodiment, that antecedent basis is correct throughout, and that no section introduces new matter not present in the original disclosure. Doing this manually across a complex application requires hours.

When AI tools generate the draft, attorneys are often unsure which parts of the output require especially close scrutiny. Without structured guidance, final review becomes a full re-read rather than a targeted inspection of high-risk passages.

Why Generic AI Tools Make This Worse

- ✕No built-in review stage: Most tools end at draft generation. There is no systematic review step, so final QA is entirely manual and unscaffolded.

- ✕No section-level focus: When tools do offer a review mode, it operates on the full document at once, producing a generic summary that is rarely actionable at the level of specificity needed before filing.

- ✕No annotation workflow: Review findings cannot be captured in context within the document. Attorneys write notes elsewhere, then manually cross-reference them during revision.

Why Section-Level Review Changes Everything

eety.ai's Final Review system analyzes each section of the application independently and produces a structured list of annotations flagging specific issues: claims lacking specification support, antecedent basis errors with the offending terms identified, embodiments described in the specification but not reflected in any claim, and potential enablement concerns in technically complex sections.

Each annotation is attached to a specific passage and can be accepted as an action item, marked as reviewed and no change needed, or dismissed. The attorney works through a structured QA queue rather than a blank-page re-read. The result is a faster, more systematic, and more defensible pre-filing review than any unassisted process can provide.
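The three dispositions described above map naturally onto a small state model. This is a sketch under assumptions: the `Annotation` and `Status` names and the field layout are hypothetical, not eety.ai's data model.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    OPEN = "open"
    ACTION_ITEM = "action item"
    NO_CHANGE_NEEDED = "reviewed, no change needed"
    DISMISSED = "dismissed"


@dataclass
class Annotation:
    section: str
    passage: str
    issue: str
    status: Status = Status.OPEN


def resolve(annotation: Annotation, status: Status) -> None:
    """Record the attorney's decision; an annotation cannot be resolved back to OPEN."""
    if status is Status.OPEN:
        raise ValueError("choose a disposition other than OPEN")
    annotation.status = status


def open_queue(annotations: list[Annotation]) -> list[Annotation]:
    """The remaining QA queue: annotations still awaiting a decision."""
    return [a for a in annotations if a.status is Status.OPEN]
```

Review is finished when `open_queue` returns an empty list, which gives the attorney a concrete stopping point instead of an open-ended re-read.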

In practice: Attorneys using eety.ai's final review stage report catching claim support issues and antecedent basis errors that would otherwise require a costly office action response to resolve after filing.

DOCX Export with Embedded Drawings

The Problem Every Patent Attorney Knows

The final step in any AI-assisted drafting workflow is getting the output into the format that firms actually use for client review, partner sign-off, and filing preparation. That format is always Word. When AI-generated text and separately produced figures must be manually assembled in Word, considerable time is spent on reformatting, figure insertion, heading structure, and pagination. This is not legal work. It is document production work, and it has no place in an attorney's billable day.

When drawings are produced by a separate tool and the specification by another, the integration is a manual step that frequently introduces inconsistencies between figure references in the text and the figures themselves.

Why Generic AI Tools Make This Worse

- ✕Text-only output: Most tools export plain text or basic HTML. Attorneys must manually create the Word document, apply heading styles, insert figures, and format the claims section.

- ✕Figures not integrated: Even when drawings are produced within a platform, they are typically exported as separate image files, leaving figure embedding and numbering to the attorney.

- ✕No jurisdiction-formatted export: The Word output, even when available, is not pre-formatted for the target jurisdiction. US and EPO documents have different structural requirements that must be applied manually.

Why Structured DOCX Export Changes Everything

eety.ai's one-click DOCX export produces a properly structured Word document that includes the full application text formatted according to the selected filing jurisdiction, all generated patent figures embedded at the correct positions with automatic figure number cross-references, and the correct heading hierarchy for the target patent office.

The figures in the exported document are the same figures generated by the Brain, positioned adjacent to their first reference in the specification. Reference numerals in the text match the labels in the drawings because both are generated from the same underlying model. There is no integration step and no figure-reference reconciliation required.
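The reason a shared model removes the reconciliation step can be shown in a few lines. In this sketch, a single hypothetical `COMPONENTS` mapping drives both the figure labels and the descriptive sentence, so the two cannot drift apart. The component names and numerals are invented for illustration.

```python
import re

# One source of truth: reference numeral -> component name (illustrative values).
COMPONENTS = {102: "housing", 104: "sensor", 106: "controller"}


def figure_labels(components: dict[int, str]) -> list[int]:
    """Numerals that would be drawn on the figure."""
    return sorted(components)


def describe(components: dict[int, str], fig_no: int) -> str:
    """Specification sentence rendered from the same mapping."""
    parts = ", ".join(f"{name} {num}" for num, name in sorted(components.items()))
    return f"FIG. {fig_no} shows {parts}."


def numerals_in(text: str) -> set[int]:
    """Three-digit reference numerals that appear in a passage."""
    return {int(n) for n in re.findall(r"\b(\d{3})\b", text)}
```

A check such as `numerals_in(describe(COMPONENTS, 1)) == set(figure_labels(COMPONENTS))` holds by construction, whereas text and drawings produced by separate tools would need that comparison run and repaired by hand.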

In practice: Attorneys report that eety.ai's DOCX export is directly usable for client review and partner sign-off with only minor formatting adjustments, eliminating the half-day of document assembly that typically follows any AI-assisted drafting session.

Multi-File Disclosure Intelligence

The Problem Every Patent Attorney Knows

Real invention disclosures are never a single clean document. Inventors submit PowerPoint decks, CAD screenshots, email threads, prototype photographs, technical specifications, prior art excerpts, and research notes, often in multiple versions that partially conflict with each other. An attorney must reconcile all of this into a coherent technical narrative before any drafting can begin.

The reconciliation step is typically invisible and unbilled, but it can take hours on a complex technical matter. And when it goes wrong, because an earlier document mentioned one embodiment and a later memo quietly revised it, the resulting application reflects an understanding that is internally inconsistent from the start.

Why Generic AI Tools Make This Worse

- ✕Single-file assumption: Most AI tools are designed around a single input document. Multi-file inventions require the attorney to manually consolidate before the AI sees anything.

- ✕Silent averaging of conflicts: When tools do accept multiple files, they blend the content without flagging contradictions. The attorney receives a confident output built on an internally inconsistent foundation.

- ✕No document-type awareness: A photograph of a prototype, a system architecture diagram, and a written specification each contain different types of information that should be interpreted differently. Generic tools treat all inputs identically.

Why Multi-File Intelligence Changes Everything

eety.ai accepts simultaneous uploads of PDFs, Word documents, images, technical specifications, and research memos. Each file is processed with awareness of its type and role: technical specifications are mined for mechanism detail, images are analyzed for structural relationships, and prior art documents are treated as context rather than as a description of the invention.

Critically, when information across files conflicts, eety.ai surfaces the conflict explicitly in the chat rather than silently resolving it. The attorney decides which version of the inventive detail is authoritative before that decision propagates into the Brain and then into the draft. The reconciliation step becomes explicit, logged, and attorney-controlled rather than hidden inside an LLM's averaging behavior.
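Surfacing conflicts rather than averaging them can be sketched as a grouping step. The sketch assumes each file has already been reduced to (source file, attribute, value) facts, which is itself the hard part and is not shown. The `find_conflicts` name, the fact format, and the file names in the usage note are hypothetical.

```python
from collections import defaultdict


def find_conflicts(facts: list[tuple[str, str, str]]) -> dict[str, dict[str, list[str]]]:
    """Group facts by attribute and keep only attributes with more than one value.

    Each fact is (source_file, attribute, value). The result maps a conflicting
    attribute to every competing value and the files that assert it, so the
    attorney can pick the authoritative one.
    """
    by_attribute: dict[str, dict[str, list[str]]] = defaultdict(dict)
    for source, attribute, value in facts:
        by_attribute[attribute].setdefault(value, []).append(source)
    return {attr: values for attr, values in by_attribute.items() if len(values) > 1}
```

For example, if `spec_v1.pdf` says the housing material is aluminum and `memo.docx` says titanium, the function returns both values with their sources instead of silently choosing one; attributes on which all files agree are omitted.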

In practice: Attorneys handling complex hardware inventions with multiple inventor contributors upload all source materials at once. eety.ai flags contradictions between files, the attorney resolves them in the chat, and the Brain is built on a reconciled foundation rather than a conflated one.